Google's June 2019 Core Update - Full Analysis Of Over 2,100,000 Pages

by Eric Lancheres, June 28th, 2019

After seeing all the coverage on the June 2019 Google Core algorithm update, I was thoroughly impressed by the other SEOs in the industry...

I never knew people could write so much without saying anything of value.

So I won't bore you with the details you already know:

"Yes, it was rolled out from June 3rd to June 8th"

"Yes, Dailymail and CCN were affected and made a lot of noise about it"

Good.

Now that I've summed up all the previous coverage on the June Core update, we're ready to jump into real data that your SEO team hasn't told you yet and that John Mueller won't be recommending on Twitter.

Preamble

My analysis combines data from multiple sources (both external and in-house) and uses a wide array of tools (custom and third-party) to process it. If you would like to submit additional data or request that data be removed, please contact me.

Any Google Search employees that would like to submit corrections or refute any information here are also welcome to contact me.

For the June 2019 update, I used:

- Over 2,100,000 search results

- Over 3,400 individual keywords

- Over 290 factors

- Detailed overview of 62 Google Analytics accounts.

And of course, the user-submitted sites, traffic graphs and testimonials from our community (thanks!).

While the raw data is irrefutable, it is important to note that correlation does not imply causation. The objective is to use the data to support or disprove theories about the June 2019 update.

All of this so you can gain a competitive advantage on Google moving forward and recover your site if you were negatively affected.

Unlike Previous Updates

This latest June update is drastically different from its predecessors. While there are some ongoing trends, the focus was completely different this time around.

This is in sharp contrast with the March 2019 Core algorithm update and the August 2018 update, which were essentially evolutions of one another. As such, we aren't seeing many reversals, and many sites that were never affected by previous updates have seen their traffic change.

This is important because if you're diagnosing a traffic drop on a client's website (or your own), depending on when the drop occurred, you're going to want to follow drastically different recovery steps.

- The update officially rolled out on June 3rd 2019 and completed on June 8th.

- Google announced the update before it rolled out, hinting at the fact that this might be a significant update.

- Many websites previously unaffected by Google updates saw changes in search engine traffic.

The Damage

The obvious first place to start with the June 2019 algorithm change is what it impacted:

Did it target specific pages or entire websites?

As many have noticed, the update seems to have affected entire domains: keywords and pages across whole domains (and even sub-domains) dropped shortly after the update.

This means that, rather than focusing on a single page, you should look for patterns that repeat throughout the entire site.

Ahrefs organic traffic of www.karissasvegankitchen.com

What's interesting about this update is that there seems to be a link between domains and their sub-domains. Here we have the Blurbusters main site and forum (two excellent sites), which both felt the impact of the June algorithm update.

While these sub-domains could be considered two entirely different websites, one forum and one article site, Google lumps them together.

This hints at the fact that during the June update, Google was looking at much more than the theme and words on the page (because both sub-domains use drastically different themes & content management systems) and has focused on a metric that affects entire domains.

Similar Sites - Different Results

One of the best ways to uncover changes is to look at very similar sites that have been impacted in different ways.

Here we have two websites with nearly IDENTICAL themes, both competing for the term "celery juice". This is a coincidence: both sites are completely independent and owned by different people.

One site saw an increase while the other saw a significant drop.

Why is it that two sites built on the Genesis Framework for WordPress, with very similar content and advertising...

Could have such drastically different reactions to the June algorithm update?

Source: Ahrefs organic traffic chart comparing www.karissasvegankitchen.com and www.cleaneatingkitchen.com

Those weren't the only similar sites experiencing different traffic changes.

Let's take the most famous site of the June 2019 update: Dailymail.co.uk

While many critics will immediately jump on the bandwagon and say "You have too many ads and a poor user experience!", I compared it with a very similar property: Metro.co.uk.

Let's compare the DailyMail.co.uk and Metro.co.uk sites:

Metro.co.uk Vs DailyMail.co.uk

Source: Screencapture comparing dailymail.co.uk and metro.co.uk ads

Source: Ahrefs organic traffic chart comparing dailymail.co.uk and metro.co.uk

Let's look at the similarities:

- Both sites have 5 ads above the fold (DailyMail.co.uk and Metro.co.uk)

- Both sites have similar layouts

- Both are covering the news and appear (at first glance) to have similar content

Yet one site dropped in traffic while the other one increased!

This suggests that it's likely not the layout, the quantity of ads, or the topics that were targeted by Google's latest June update.

While both sites could certainly improve in each area (seriously, my screen doesn't need 5 ads at a time), if that were the culprit in the June 2019 update, Metro.co.uk would likely have seen a drop as well.

Process Of Elimination

The June 2019 update makes it quite difficult to predict if a site will be hit or not by looking exclusively at the content. We've seen that similar looking sites have been impacted differently by the June 2019 update which likely means:

- It's NOT related to the user experience (similar sites would likely have a similar user experience)

- It's NOT related to better written content (all sites reviewed had comparable titles, sub-headlines, LSI content)

- It's NOT related to the layout, the framework, nor the quantity of ads on a page.

- It's NOT related to speed (all sites loaded within similar time frames).

And of course, all sites reviewed had decent engagement, social presence and traffic.

That leaves one thing:

Links.

The Trust Metric

The big change that occurred during the June 2019 Core update was related to links and, more specifically, domain authority.

Google changed the way it evaluates certain links AND significantly increased the importance of domain authority with regards to ranking within the search engine.

Before I dive into the data, can you guess which site was affected by the recent update?

Source: Ahrefs traffic chart of Google results for "celery juice"

For health-related terms, there has been a significant shift in priority toward content from high-authority domains. In fact, according to Ahrefs, most domains ranking for "celery juice" have a Domain Rating of DR 80+, and those with lower authority were likely to see a drop in rankings.

Google's recent shift in the way it evaluates domain authority means that ALL the metric services will have some catching up to do with the new algorithm.

Let me be clear:

Metric tools such as Ahrefs, Moz, Majestic and SEMRush (among others) model their algorithms to reflect how Google evaluates links. When Google changes the way it evaluates links (increasing the importance of certain elements, ignoring some other links, etc.), these services must update themselves in order to stay as accurate as possible.

Historically, these services struggled to account for spam, which meant that we had wildly inaccurate metrics if a site was hit by SENuke, Xrumer or GSA. The result was that, in spite of a site not ranking on Google, the metric services would assign it a high score.

Fortunately, most metric services have significantly improved their ability to detect and ignore spam links in order to produce results that are more aligned with Google rankings. While they aren't perfect, they have certainly come a long way.

With this new algorithm update, Google has changed the way the domain authority metric is calculated by refining their link scoring system.

Link Changes

While it makes sense that Google would increase the importance of links in order to combat misleading and potentially dangerous content online, some false content can be very popular.

As such, Google looked beyond raw link counts and focused on links that convey trust and authority.

That's why you can have two very similar sites, with similar content and have drastically different outcomes.

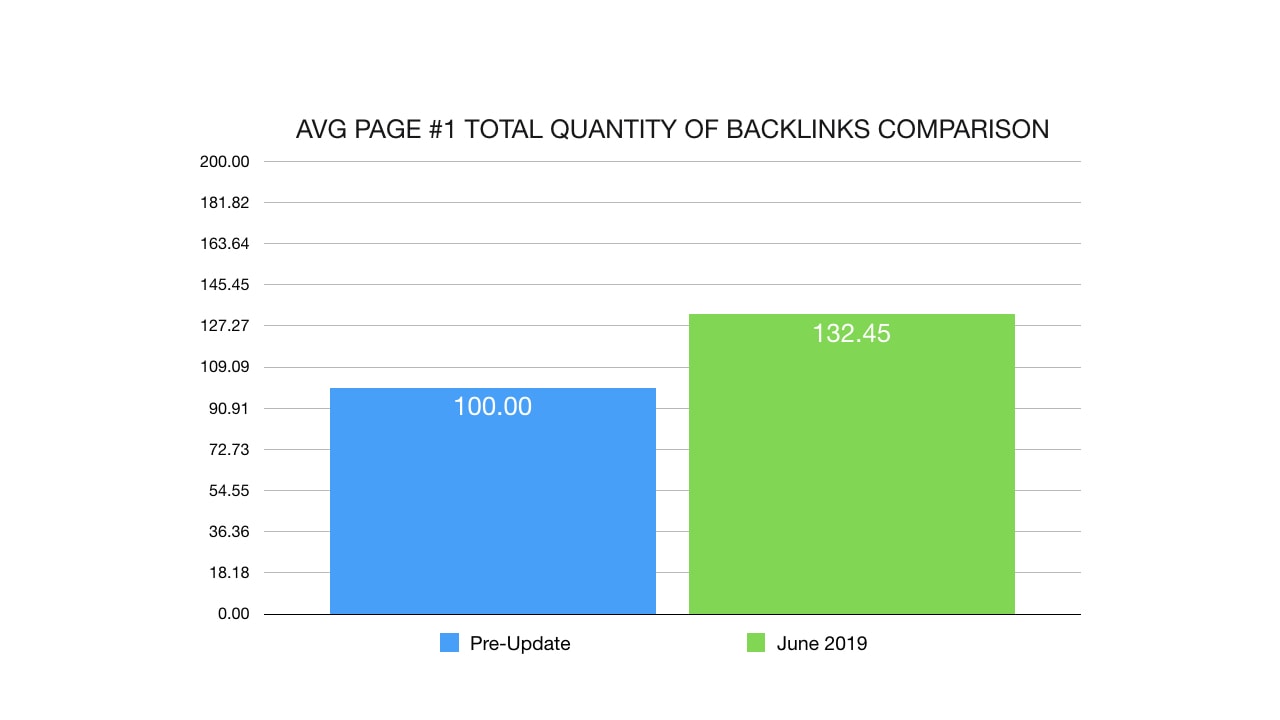

Starting with the backlinks, we can clearly see that the results on the first page of Google have significantly more links post-June 2019.

As we can see, results on page 1 have 32% more raw backlinks after the update.
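Figures like "32% more raw backlinks" are relative changes. A minimal sketch of that arithmetic, assuming the comparison is between the average per-result backlink count on page #1 before and after the update (the study doesn't spell out its exact method, and the sample numbers are made up for illustration):

```python
from statistics import mean


def percent_change_of_average(before: list[float], after: list[float]) -> float:
    """Relative change (in %) between the averages of two samples.

    Hypothetical helper: `before` and `after` hold one metric (e.g. backlinks
    per page #1 result) collected before and after an update.
    """
    old, new = mean(before), mean(after)
    if old == 0:
        raise ValueError("baseline average is zero; percent change is undefined")
    return (new - old) * 100 / old


# Hypothetical backlink counts for three page #1 results, not the study's data.
print(percent_change_of_average([100, 200, 300], [150, 250, 392]))  # 32.0
```

Averages are sensitive to a handful of mega-sites with millions of links, so comparing medians (`statistics.median`) alongside the mean is a cheap sanity check.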

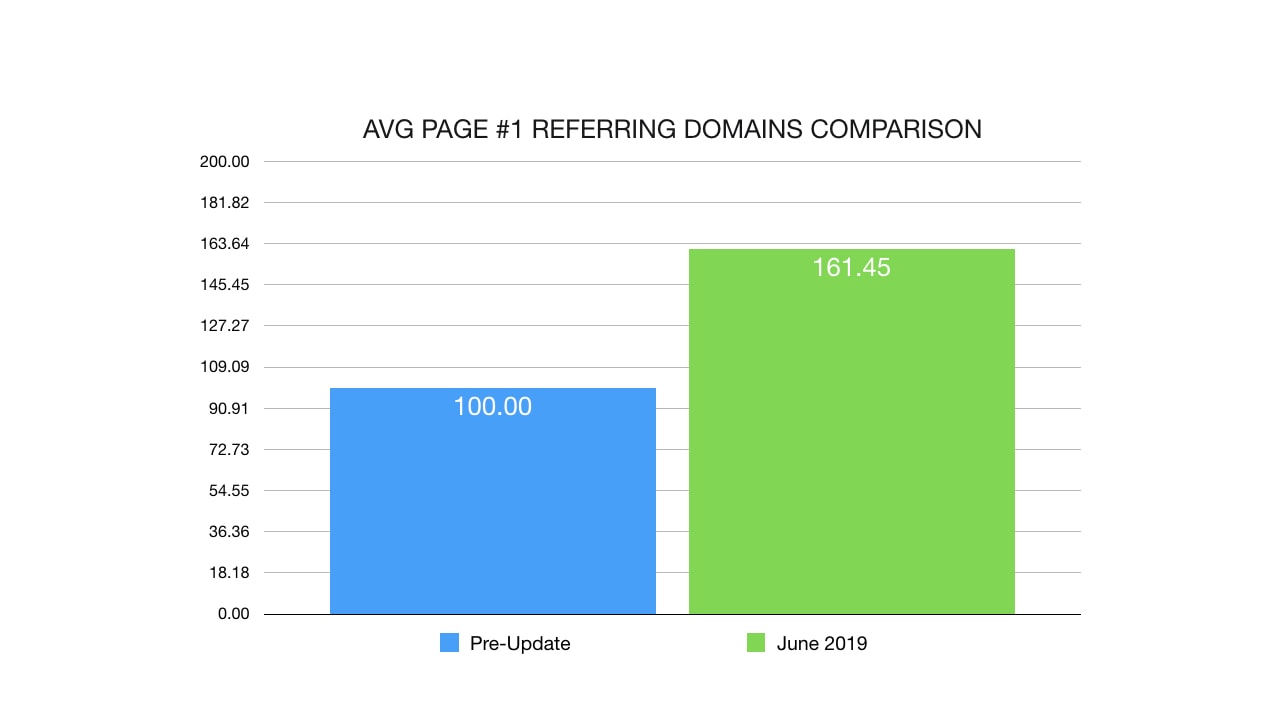

An interesting change is that the number of referring domains increased by a huge 61%. That's a considerable change!

This suggests that you'll see better results if you get links from multiple domains rather than the same number of links from a single domain.
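The difference between raw backlinks and referring domains is easy to see in code. A minimal sketch, assuming you have a list of linking-page URLs (for example, exported from a backlink tool); `link_profile_summary` is a hypothetical helper, and sub-domains are counted as separate hosts unless you map them to a registrable domain with a public-suffix list:

```python
from urllib.parse import urlparse


def link_profile_summary(backlink_urls: list[str]) -> dict[str, int]:
    """Hypothetical helper: count raw backlinks and unique referring hosts.

    A leading "www." is stripped so www.example.com and example.com count once.
    Other sub-domains (blog.example.com) are treated as separate hosts.
    """
    hosts = set()
    for url in backlink_urls:
        host = urlparse(url).hostname  # already lower-cased; None if missing
        if not host:
            continue
        if host.startswith("www."):
            host = host[len("www."):]
        hosts.add(host)
    return {"backlinks": len(backlink_urls), "referring_domains": len(hosts)}


# Ten links from one site vs. one link from each of ten sites (made-up URLs).
one_site = [f"https://blog.example.org/post-{i}" for i in range(10)]
ten_sites = [f"https://site{i}.example/post" for i in range(10)]
print(link_profile_summary(one_site))   # {'backlinks': 10, 'referring_domains': 1}
print(link_profile_summary(ten_sites))  # {'backlinks': 10, 'referring_domains': 10}
```

Both profiles have the same raw link count, but only the second one adds ten referring domains.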

Do-Follow Vs No-Follow

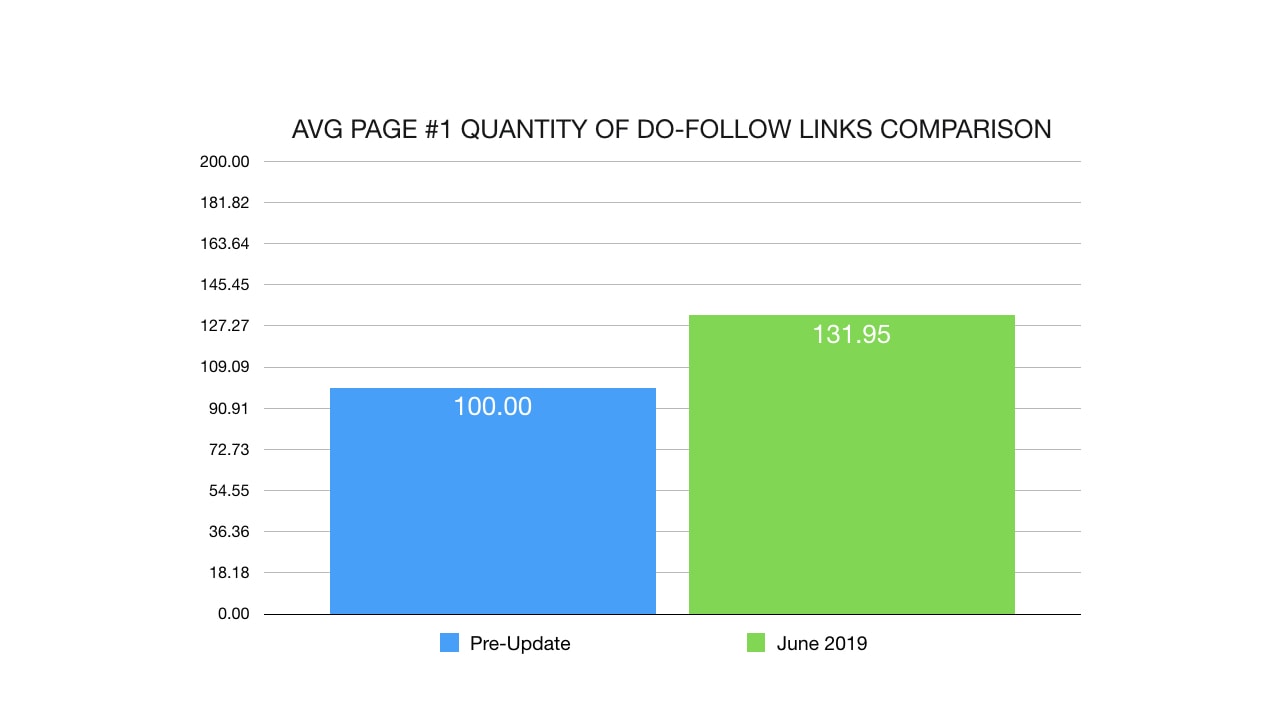

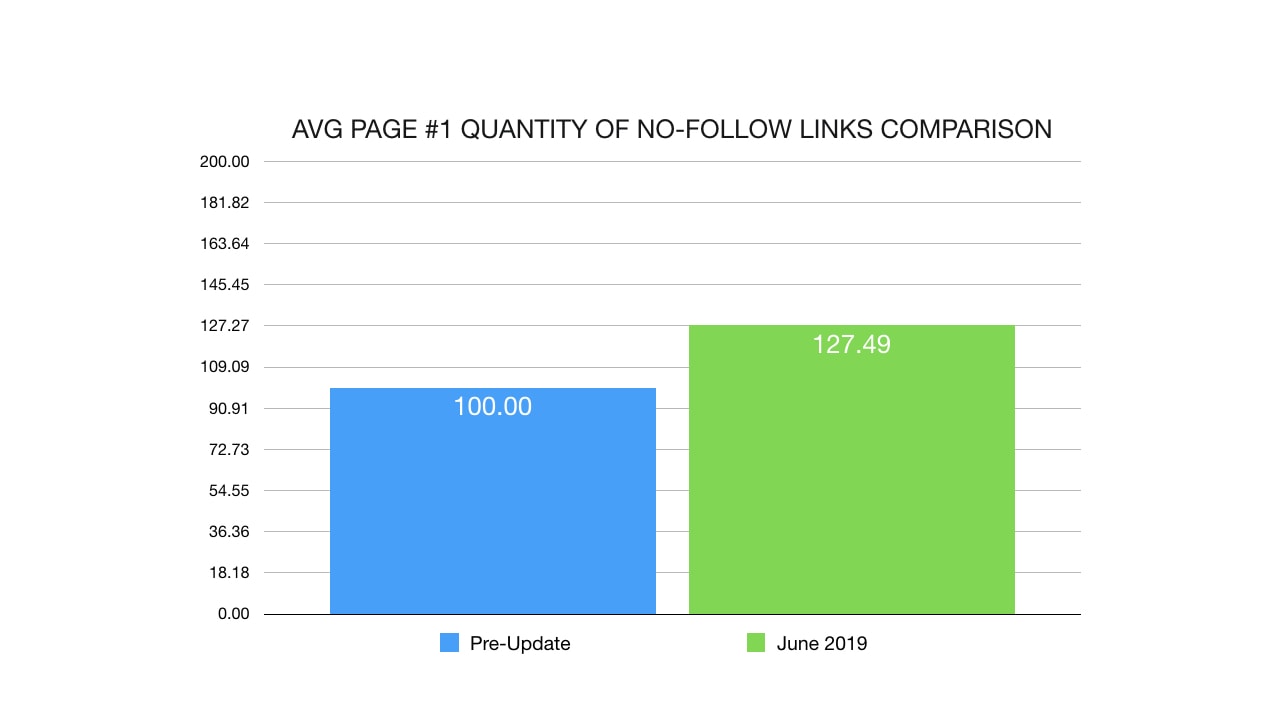

Diving deeper into the type of link, we evaluated changes in both do-follow and no-follow links.

The average page #1 domain has 31% more do-follow links, which is in line with the total backlink increase we observed.

And the quantity of no-follow links increased as well, by a smaller, yet still substantial, 27%. In summary, the domains that are ranking on page #1 of Google now have more links.

Link Types

Diving deeper into the types of links that Google started to favor, I looked at anchor text to see if there were any differences between the types of anchors that were preferred.

Were there more brand links?

Direct anchor links?

Keyword related links?

Generic links?

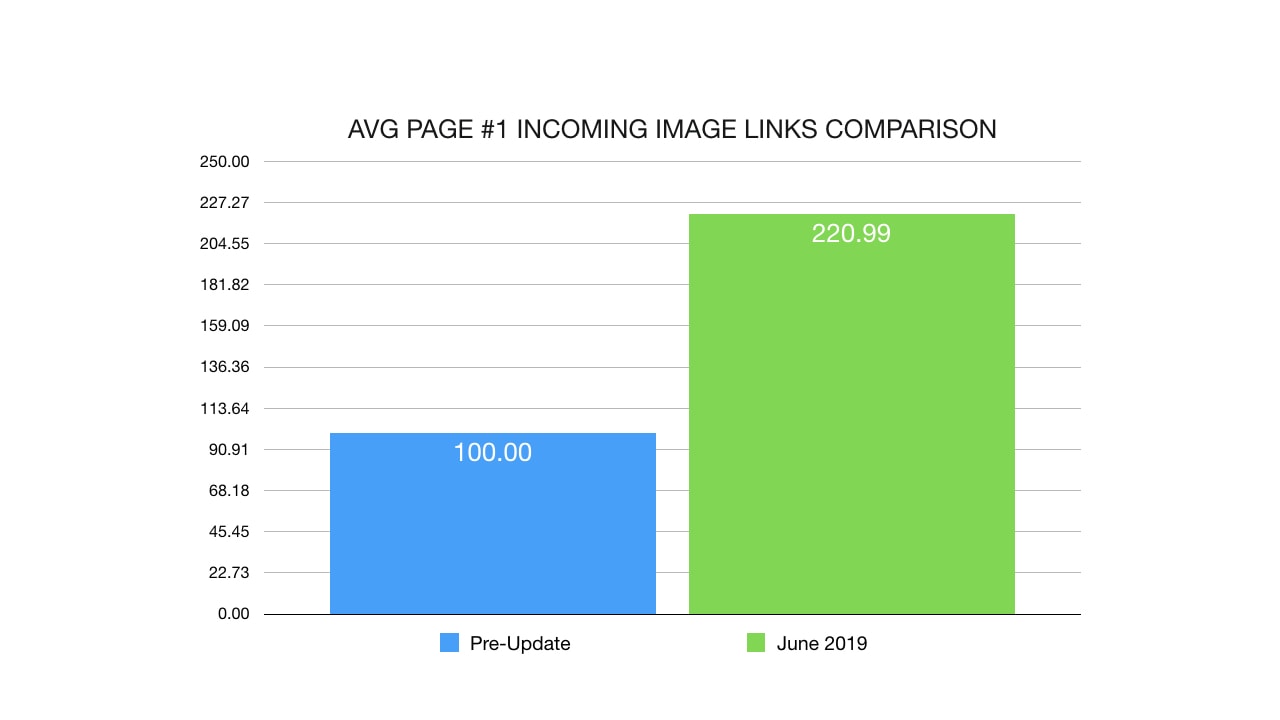

While there was no immediate difference, I did notice ONE type of anchor that experienced a significant increase... and to my surprise, it had no anchor text at all!

Instead, I found that image links saw a significant change.

While this might be a coincidence (perhaps sites receiving image ad links or sponsor images have seen an increase), I saw a major upshift (over 120%) in sites with incoming image links.

Once again, I'd like to point out that correlation does not imply causation. Just because many of the "winning" sites have incoming image links doesn't mean that if you build a few image links, you'll experience the same results.

Link Location

The June Core algorithm update by Google seems to have been focused around domain authority. While the number of raw links increased, we know that Google is focused on link quality.

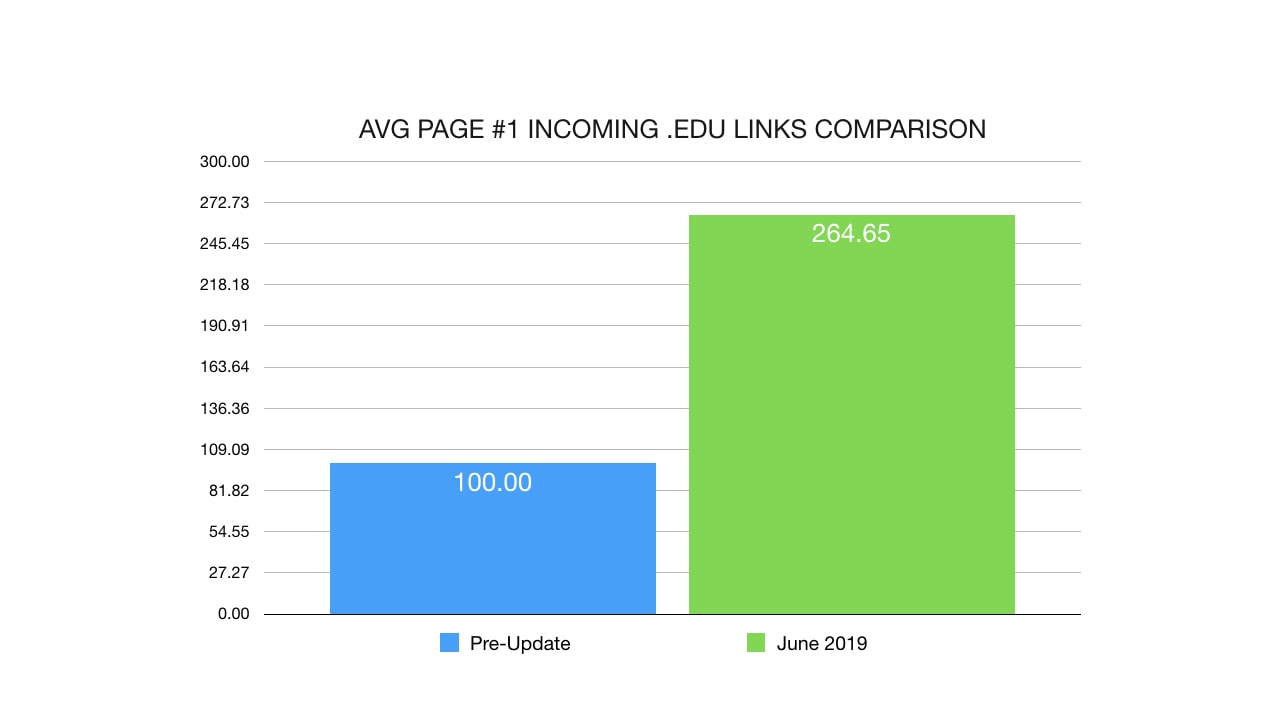

That's why I was surprised (and not surprised at the same time) to see this massive change:

An increase of 164% more .edu links pointing to domains ranking on page #1 of Google. It makes sense that when Google is looking for trusted, authoritative sources of links, educational and scientific institutes would be at the top of the list.

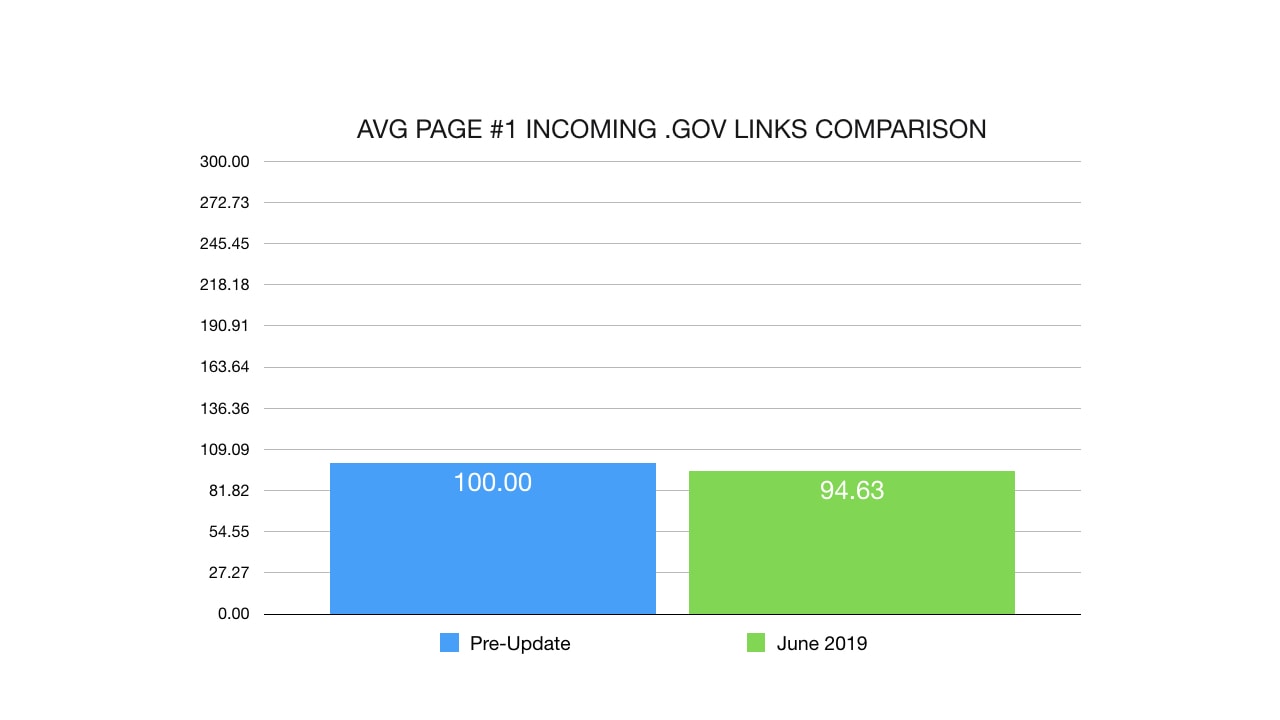

If you recall, during the March Core update we saw an increase in .gov links, and it seems as if Google toned it down slightly (likely just refining the values) in the June update.

While the effect of .gov links seems to have diminished ever so slightly, remember that they had previously increased by a substantial amount (148%) in March 2019, which means that they are still quite valuable. Don't let this single graph mislead you: we still see a 142% increase in .gov links compared to 2018.
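To see how a 148% rise followed by a "slight" dip can still net out at 142%, remember that relative changes compound. A small sketch, assuming both percentages are measured against the same 2018 baseline and that June is the only change in between (the study doesn't say so explicitly); `implied_second_change` is a hypothetical helper:

```python
def implied_second_change(first_pct: float, cumulative_pct: float) -> float:
    """Hypothetical helper: the second relative change (in %) implied by a first
    change and the cumulative change, both measured from the same baseline.

    (1 + a) * (1 + b) = 1 + total  =>  b = (1 + total) / (1 + a) - 1
    """
    return ((1 + cumulative_pct / 100) / (1 + first_pct / 100) - 1) * 100


# +148% by March 2019, +142% vs. 2018 after June:
print(round(implied_second_change(148, 142), 1))  # -2.4
```

Under those assumptions, the June change in .gov links works out to roughly -2.4%, which fits the "ever so slightly" description.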

Internal Links

After seeing all the backlink changes, I was curious to dive into site structure and internal links. Here's what I found:

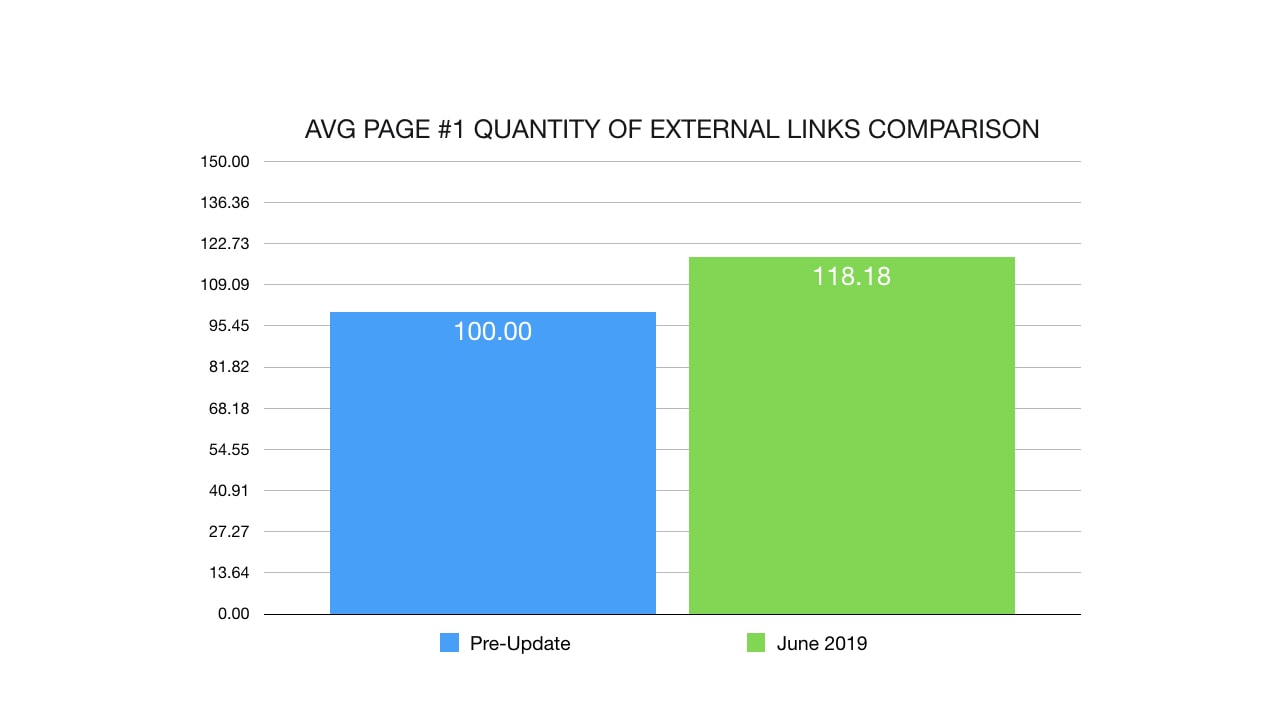

There was an increase of 18% in external links on the pages ranking on page #1 of Google. This usually indicates that the pages ranking are listing more references & sources, commonly found in authoritative content.

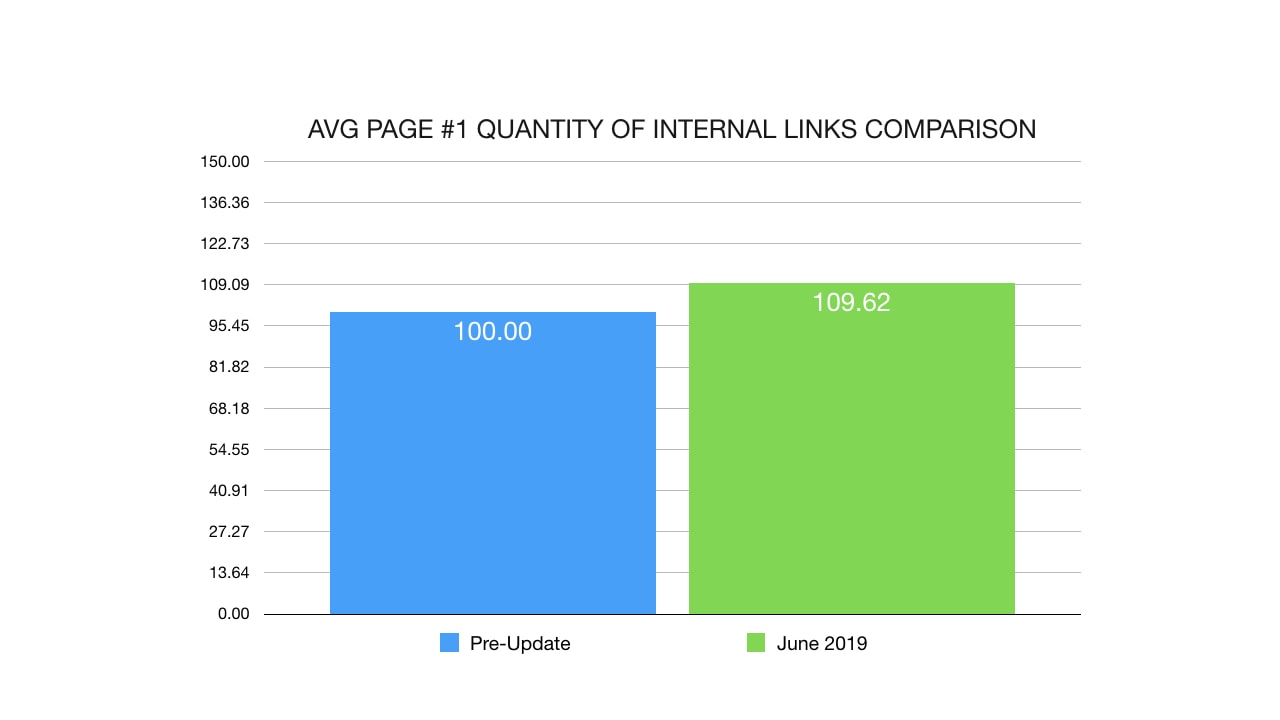

I also noticed a slight increase in internal links on the sites ranking on page #1 which might be associated with better link flow throughout the site.
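If you want to compare your own pages against the page #1 results, counting outbound and internal links is straightforward. A minimal sketch using only Python's standard library; the `LinkCounter` class is hypothetical, and it treats any other host (including your own sub-domains) as external:

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCounter(HTMLParser):
    """Hypothetical helper: counts internal vs. external <a href> links on one page.

    "Internal" means same hostname as the page; sub-domains count as external.
    """

    SKIP_PREFIXES = ("#", "mailto:", "tel:", "javascript:")

    def __init__(self, page_url: str):
        super().__init__()
        self.page_url = page_url
        self.page_host = urlparse(page_url).hostname
        self.internal = 0
        self.external = 0

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if not href or href.startswith(self.SKIP_PREFIXES):
            return  # no link, same-page fragment, or non-web scheme
        absolute = urljoin(self.page_url, href)  # resolve relative URLs
        if urlparse(absolute).hostname == self.page_host:
            self.internal += 1
        else:
            self.external += 1


counter = LinkCounter("https://www.example.com/post")
counter.feed('<a href="/about">About</a> <a href="https://example.org/study">Study</a> <a href="#top">Top</a>')
print(counter.internal, counter.external)  # 1 1
```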

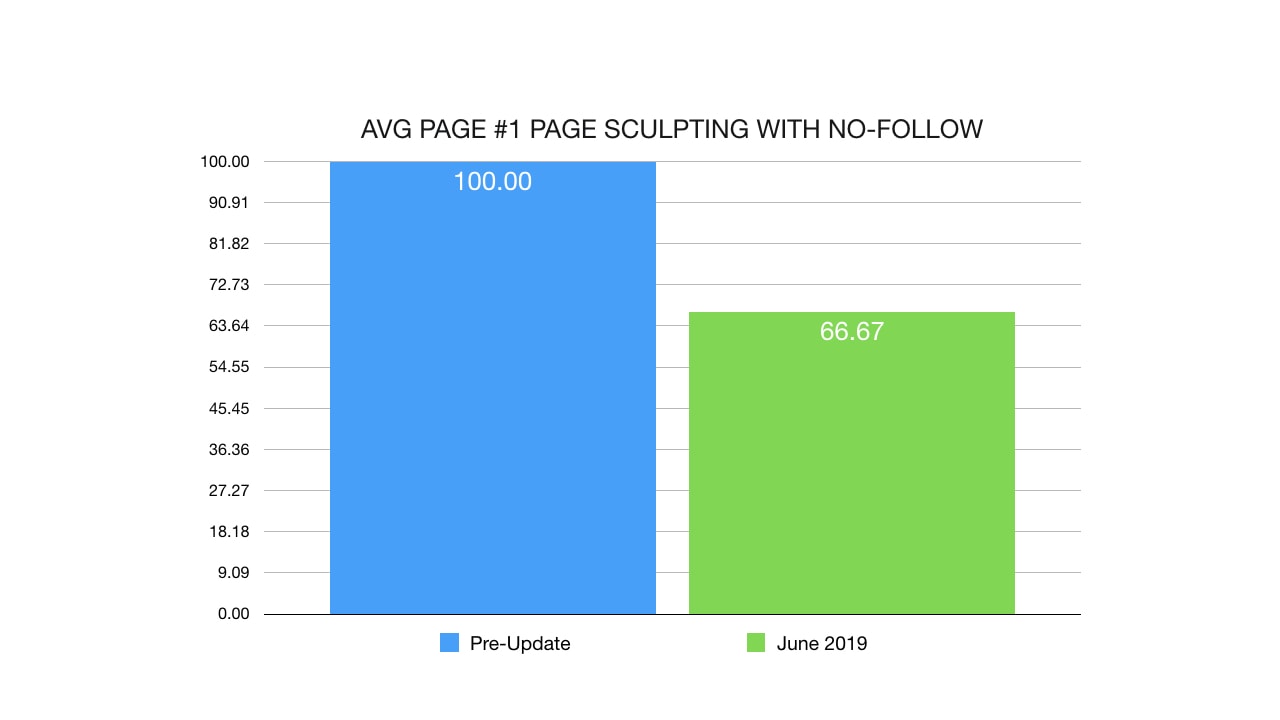

Last, but not least, I discovered an interesting trend that seems to have impacted sites that no-follow a large portion of their internal links.

One theory is that sites have been using no-follow to cover up large portions of thin / problematic content. Another theory is that sites with more user generated content have more no-follow internal links.

In either case, I'm not saying that no-following internal links will cause ranking drops; however, it is a trend observed in the sites that were negatively impacted by the June 2019 Core update.

Personally, I use do-follow for nearly all my internal links. My thinking is that if you don't trust your own content enough to follow your internal links to it, why should Google trust your content?

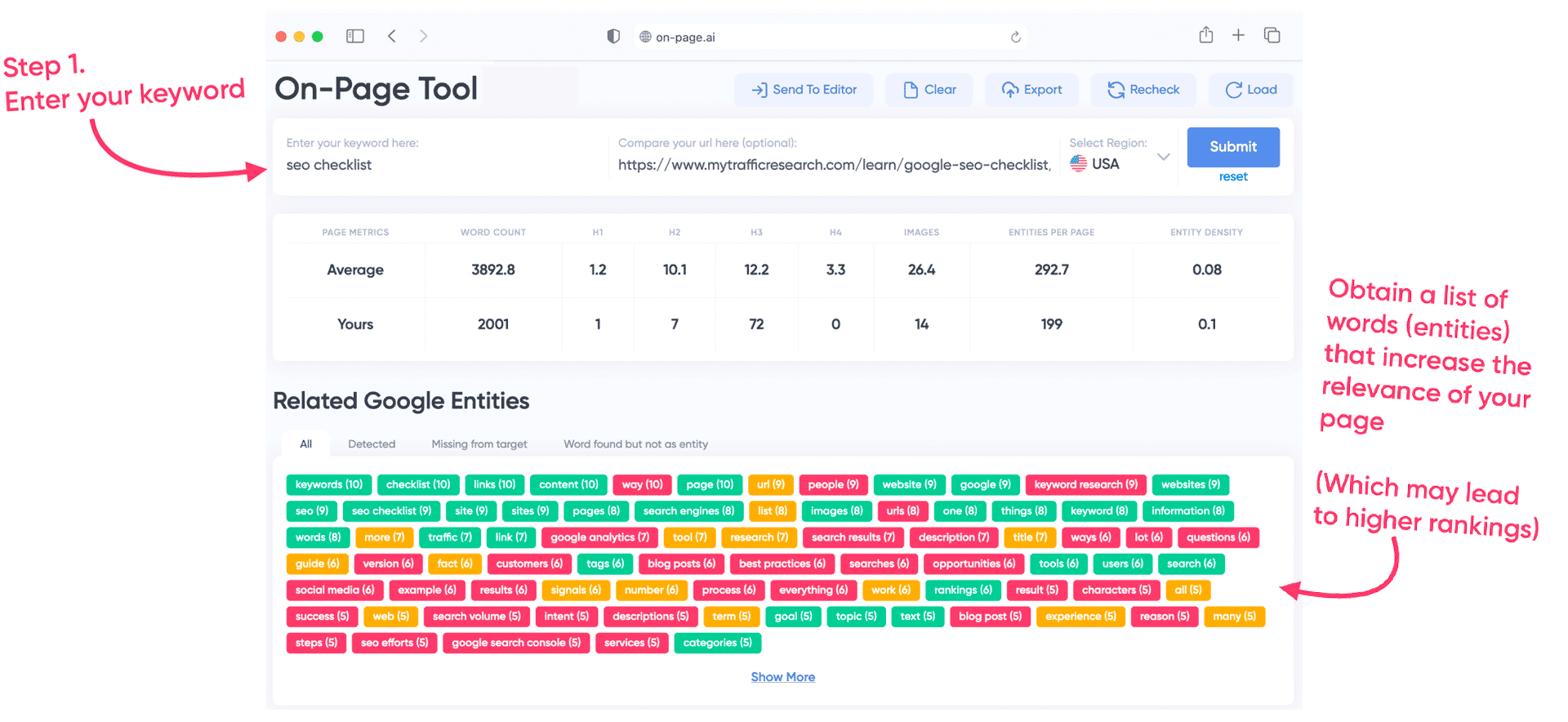
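To check whether your own site shows the pattern described above, you can measure what share of a page's internal links carry `rel="nofollow"`. A minimal sketch; `InternalNofollowAudit` is a hypothetical name, it audits one HTML page at a time, and it treats other hosts (including your sub-domains) as external:

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class InternalNofollowAudit(HTMLParser):
    """Hypothetical audit: counts internal links on one page and how many
    of them carry rel="nofollow" (other hosts, incl. sub-domains, are skipped)."""

    def __init__(self, page_url: str):
        super().__init__()
        self.page_url = page_url
        self.page_host = urlparse(page_url).hostname
        self.internal = 0
        self.internal_nofollow = 0

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr = dict(attrs)
        href = attr.get("href")
        if not href or href.startswith("#"):
            return  # missing href or same-page fragment
        if urlparse(urljoin(self.page_url, href)).hostname != self.page_host:
            return  # external, mailto:, javascript: etc. are not internal links
        self.internal += 1
        # rel can hold several space-separated values, e.g. "ugc nofollow".
        if "nofollow" in (attr.get("rel") or "").lower().split():
            self.internal_nofollow += 1

    def nofollow_share(self) -> float:
        return self.internal_nofollow / self.internal if self.internal else 0.0


audit = InternalNofollowAudit("https://www.example.com/post")
audit.feed('<a href="/a">A</a><a href="/b" rel="nofollow">B</a>'
           '<a href="/c" rel="ugc nofollow">C</a><a href="/d">D</a>'
           '<a href="https://example.org/" rel="nofollow">Ext</a>')
print(audit.internal, audit.internal_nofollow, audit.nofollow_share())  # 4 2 0.5
```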

2019 On-Page Changes

When we compared similar sites, we noticed that on-page content didn't seem to be the deciding factor when it came to huge upswings or downswings... but does that mean that on-page didn't change at all?

Not quite.

While we didn't see a drastic change in the content appearing on page #1 of Google, some minor differences inevitably occurred.

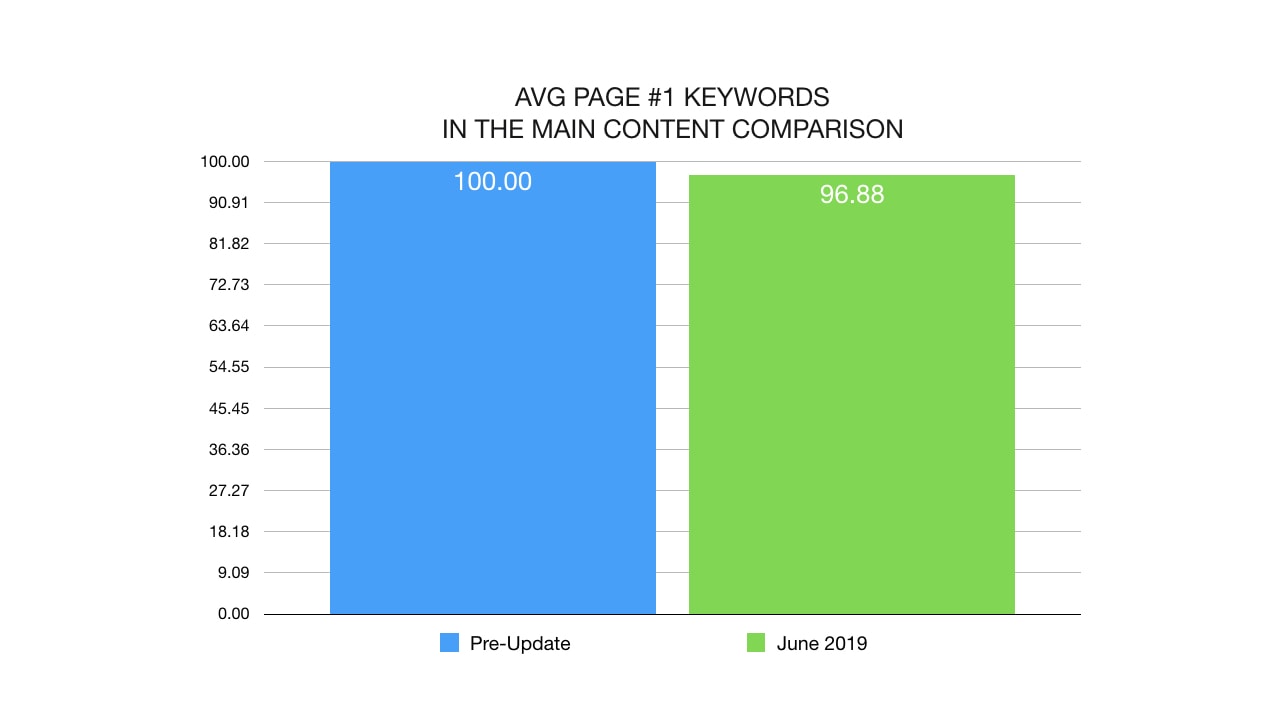

We saw slightly fewer keywords on the pages ranking on page #1 of Google. This makes sense given the increased weight of domain authority.

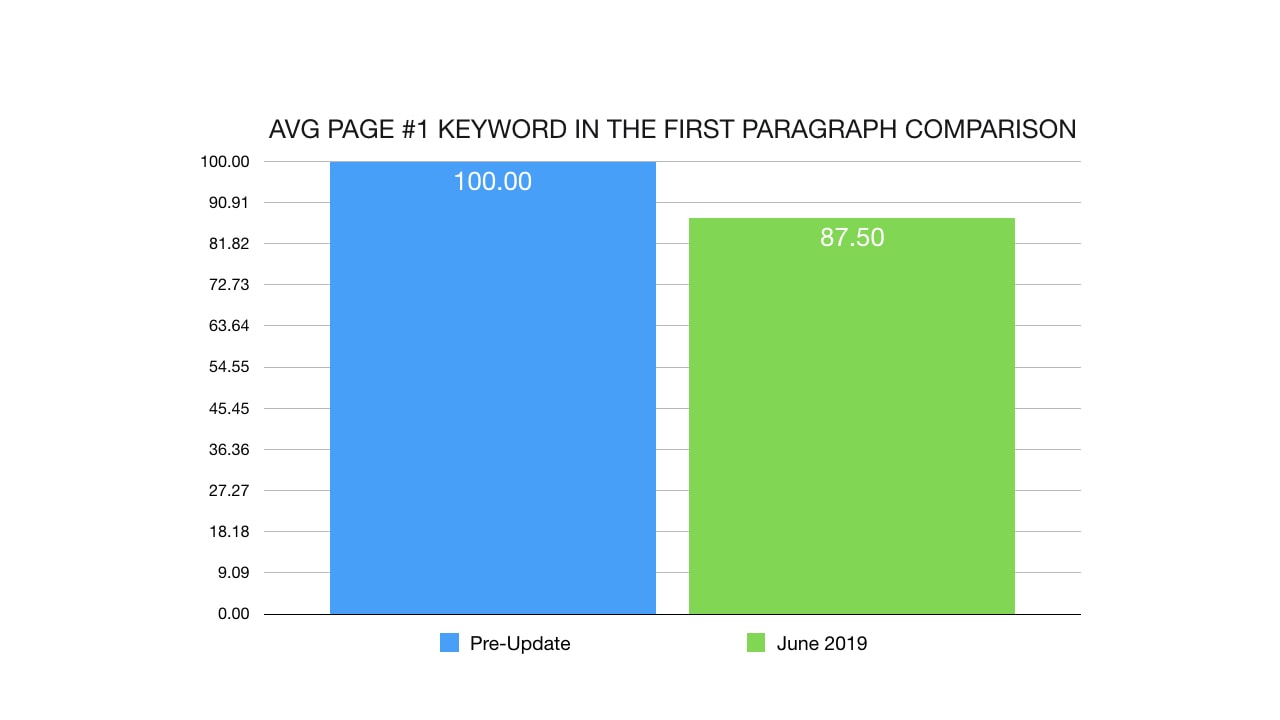

The increase in domain authority also meant that we saw 12.5% fewer keywords in the first paragraph of articles on page #1. I personally don't think this was intentional; instead, it's likely another side effect of increasing the weight of links.
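Keyword use in the first paragraph is simple to approximate. A minimal sketch, assuming "first paragraph" means the first `<p>` element and that the keyword is matched as an exact, case-insensitive phrase; the study's own tooling may define both differently, and the helper names are hypothetical:

```python
import re
from html.parser import HTMLParser


class FirstParagraph(HTMLParser):
    """Hypothetical helper: collects the text of the first <p> element."""

    def __init__(self):
        super().__init__()
        self.in_first_p = False
        self.done = False
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if tag == "p" and not self.done:
            self.in_first_p = True

    def handle_endtag(self, tag):
        if tag == "p" and self.in_first_p:
            self.in_first_p = False
            self.done = True  # ignore every later <p>

    def handle_data(self, data):
        if self.in_first_p:
            self.parts.append(data)  # includes text inside <b>, <a>, etc.


def keyword_count_in_first_paragraph(html: str, keyword: str) -> int:
    """Case-insensitive, whole-phrase count of `keyword` in the first <p>."""
    parser = FirstParagraph()
    parser.feed(html)
    text = re.sub(r"\s+", " ", "".join(parser.parts)).lower()
    phrase = " ".join(keyword.lower().split())
    return len(re.findall(r"\b" + re.escape(phrase) + r"\b", text))


page = ("<h1>Celery Juice</h1><p>Celery juice is popular. Drink <b>celery juice</b> daily.</p>"
        "<p>More celery juice here.</p>")
print(keyword_count_in_first_paragraph(page, "celery juice"))  # 2
```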

What Didn't Change

While we saw slight side effects of links on keyword optimization, there are other metrics that didn't budge at all. Here's some data suggesting that Google didn't significantly change the way it evaluates on-page content.

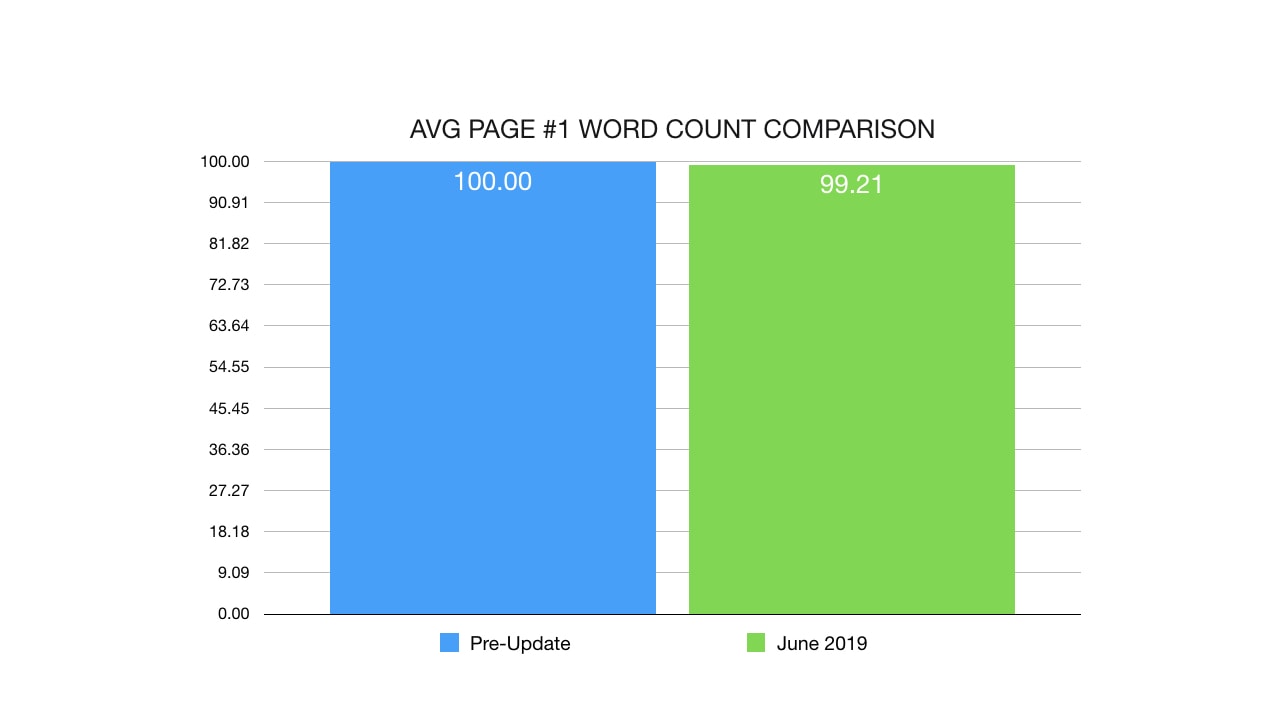

The average word count of content ranking on page #1 remained essentially the same.

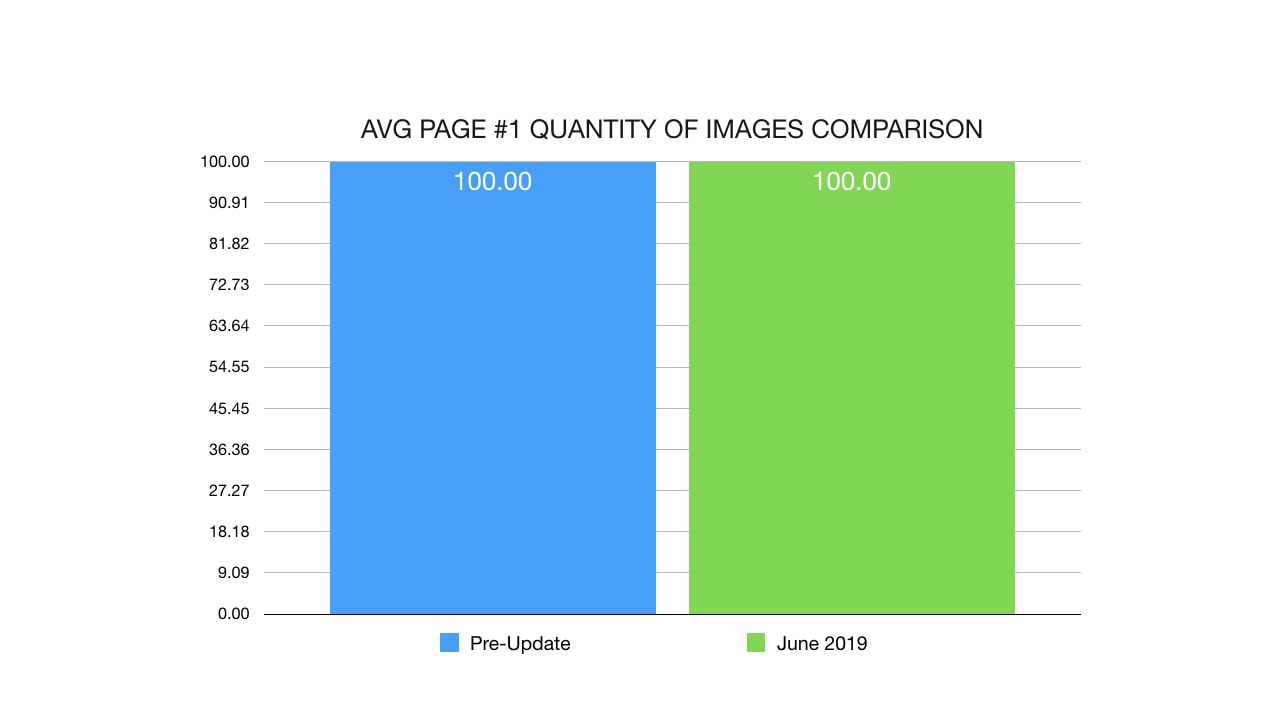

So did the quantity of images on a single page. Nothing changed here.

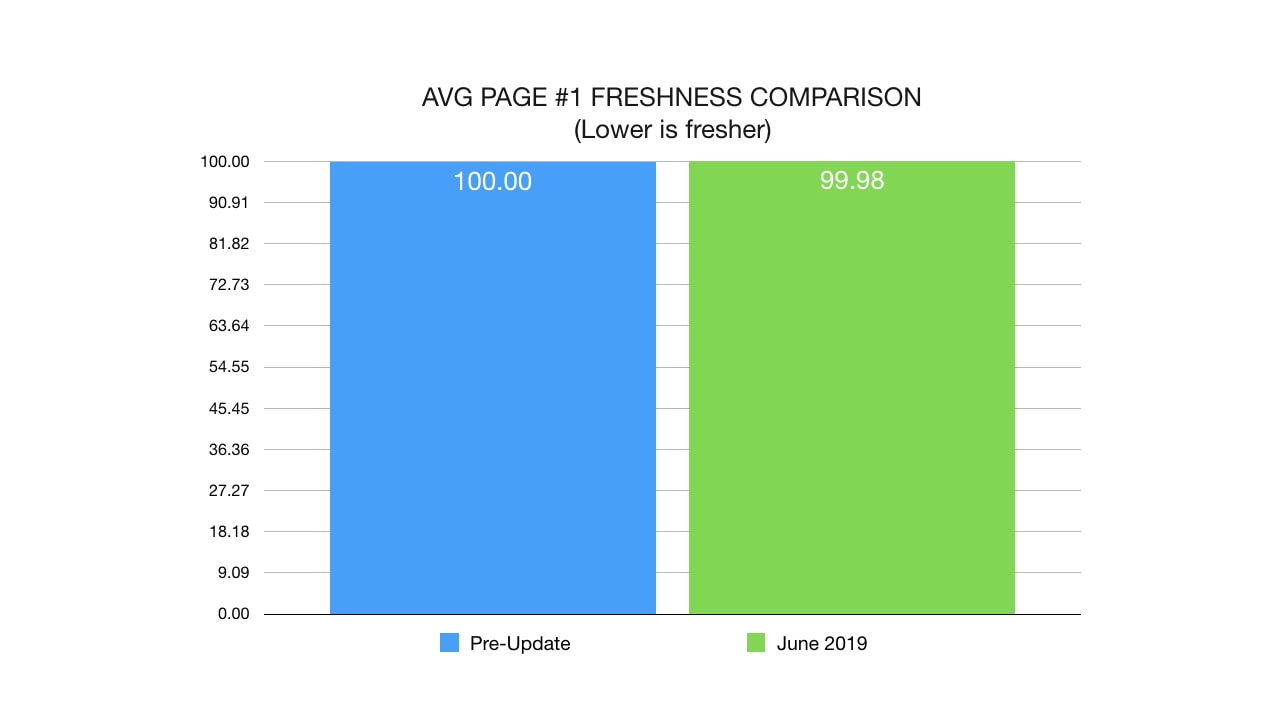

Nor did Google suddenly decide to change its preference for freshness.

While it's clear that good content can be a leading force in getting good links (and consequently increasing rankings), it appears that, from an algorithmic perspective, the key to recovering from the June 2019 Core update is neither to rewrite all your content nor to update the dates.

Make no mistake though, if I'm helping you recover from an update and I spot a problem with the content that is preventing you from gaining authority links, that will be the FIRST thing I correct.

Ranking Factor Trends

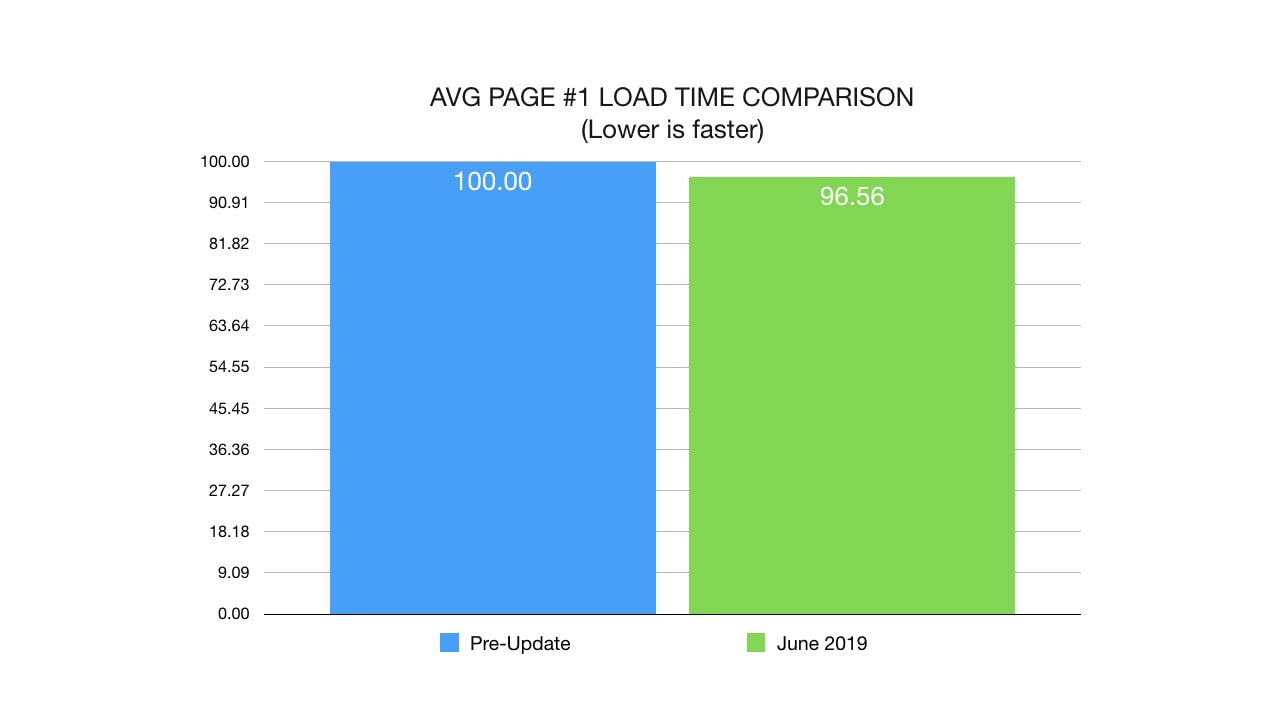

We did, however, see a continued decrease in server load time. With each update, the websites on page 1 are loading faster and faster.

Not by much (3.5%) ... but if you consider that we saw a similar decrease in both the August and March updates, those add up to quite a bit.

March Core Algorithm Update: 2%

June Core Algorithm Update: 3.5%

Compared to just last year, the average page #1 result now loads 5.5% faster.

If you're still trying to rank with a slow server, you're making it harder on yourself.
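The 5.5% figure matches simply adding the March (2%) and June (3.5%) reductions. If each reduction is instead measured against the previous update's average (an assumption; the study doesn't specify), the reductions compound, which a short sketch makes concrete (`combined_reduction` is a hypothetical helper):

```python
from math import prod


def combined_reduction(step_reductions_pct: list[float]) -> float:
    """Hypothetical helper: combine successive % reductions multiplicatively."""
    remaining = prod(1 - r / 100 for r in step_reductions_pct)
    return (1 - remaining) * 100


print(round(combined_reduction([2, 3.5]), 2))  # 5.43
```

Either way, the combined improvement is roughly 5.4 to 5.5%.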

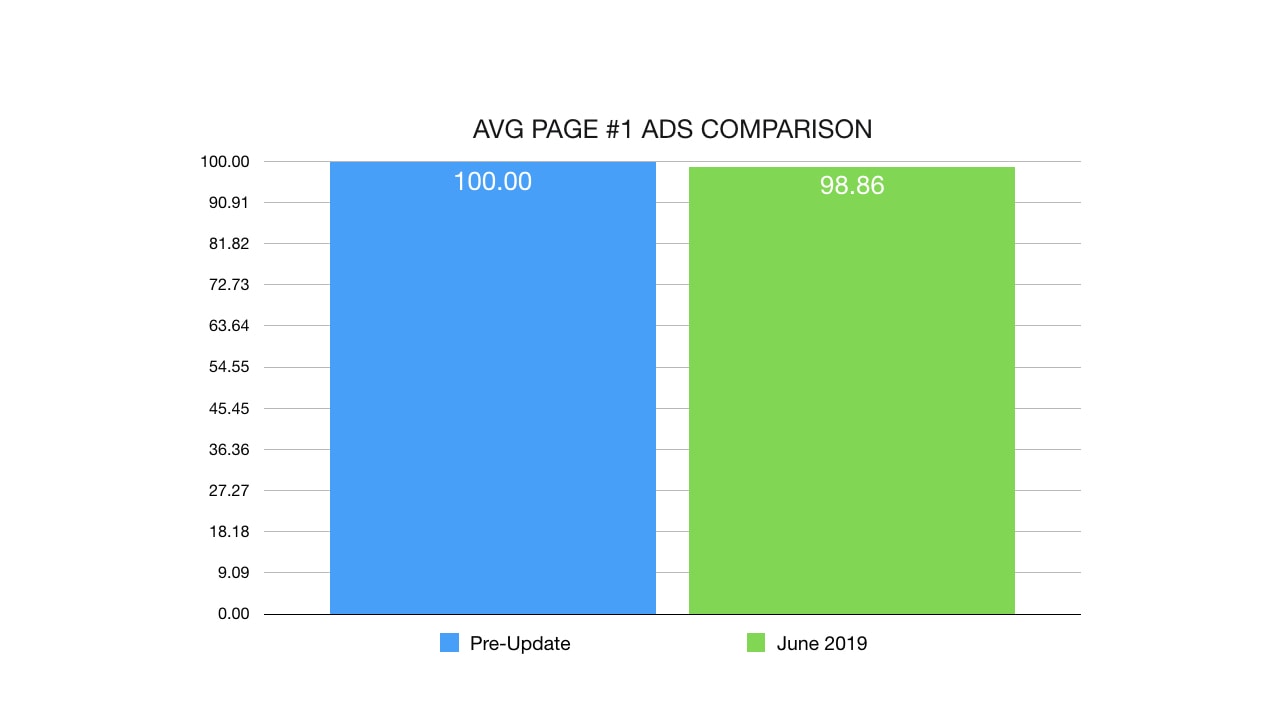

Another trend we noticed is that pages with fewer ads performed slightly better. While this is definitely not the major culprit of this update (as we pointed out in our similar-domain comparison), it's worth noting.

Once again, this is a very slight change that might or might not be intentional. Perhaps more authoritative domains just happen to have fewer ads and this is simply a consequence of the update.

Notable Surprises With HTML Tags

In terms of the code found on the page, we found slight differences which might or might not be direct changes made by Google.

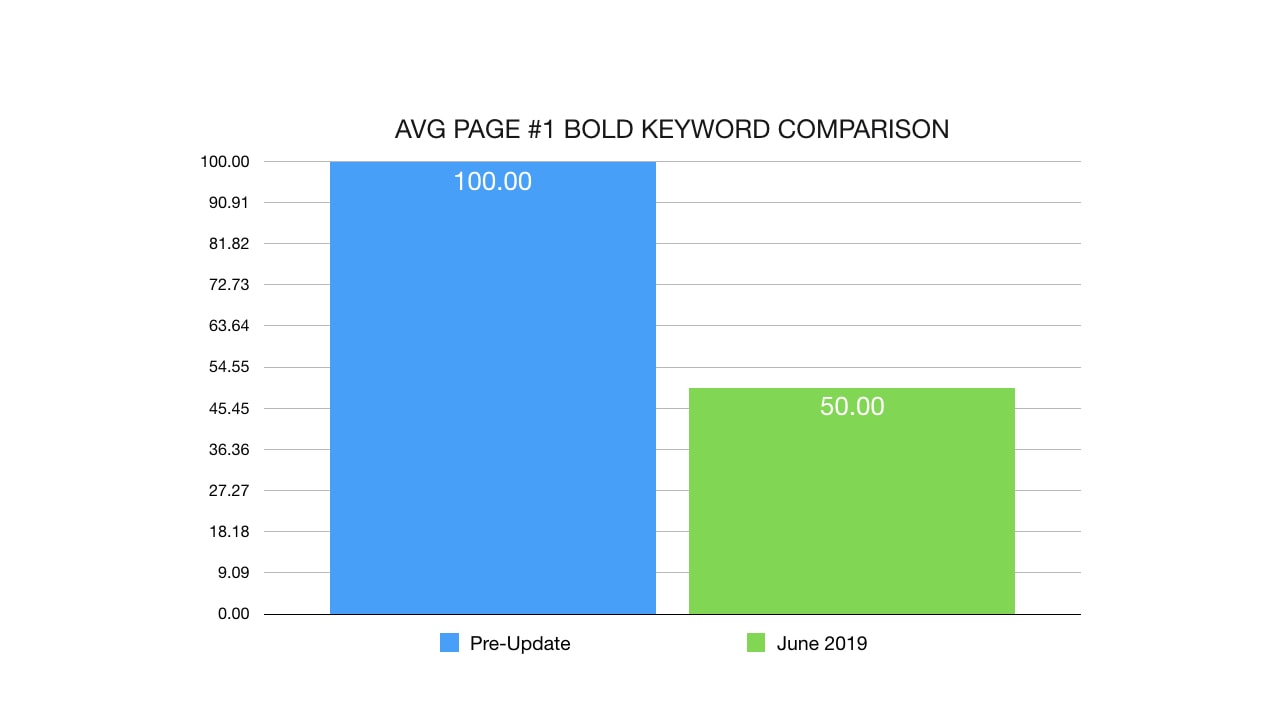

The quantity of keywords in bold found on page #1 of Google diminished. This might be a side effect of increasing the domain authority (do authoritative sites bold keywords?) or perhaps it was an intended change by Google.

While the drop might seem massive, few websites still bold their keywords, so a relatively small drop appears bigger than it actually is.

Comparatively, italicized keywords didn't change at all (but then again, who italicizes their keyword on purpose?).
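A minimal sketch for counting bold and italic keyword mentions on a page with Python's standard-library HTML parser. `EmphasisText` and `count_phrase` are hypothetical helpers; a keyword split across nested tags (e.g. `<b>celery <i>juice</i></b>`) won't be matched as one phrase:

```python
import re
from html.parser import HTMLParser


class EmphasisText(HTMLParser):
    """Hypothetical helper: gathers text inside bold and italic tags."""

    BOLD = {"b", "strong"}
    ITALIC = {"i", "em"}

    def __init__(self):
        super().__init__()
        self.bold_depth = 0
        self.italic_depth = 0
        self.bold_text = []
        self.italic_text = []

    def handle_starttag(self, tag, attrs):
        if tag in self.BOLD:
            self.bold_depth += 1
        elif tag in self.ITALIC:
            self.italic_depth += 1

    def handle_endtag(self, tag):
        if tag in self.BOLD and self.bold_depth:
            self.bold_depth -= 1
        elif tag in self.ITALIC and self.italic_depth:
            self.italic_depth -= 1

    def handle_data(self, data):
        # Text can be both bold and italic, so check each independently.
        if self.bold_depth:
            self.bold_text.append(data)
        if self.italic_depth:
            self.italic_text.append(data)


def count_phrase(chunks: list[str], keyword: str) -> int:
    """Case-insensitive whole-phrase matches, counted per text chunk."""
    pattern = r"\b" + re.escape(" ".join(keyword.lower().split())) + r"\b"
    return sum(len(re.findall(pattern, re.sub(r"\s+", " ", chunk.lower())))
               for chunk in chunks)


parser = EmphasisText()
parser.feed("<p>Try <strong>celery juice</strong> and <b>Celery Juice</b> or <em>celery juice</em>.</p>")
print(count_phrase(parser.bold_text, "celery juice"), count_phrase(parser.italic_text, "celery juice"))  # 2 1
```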

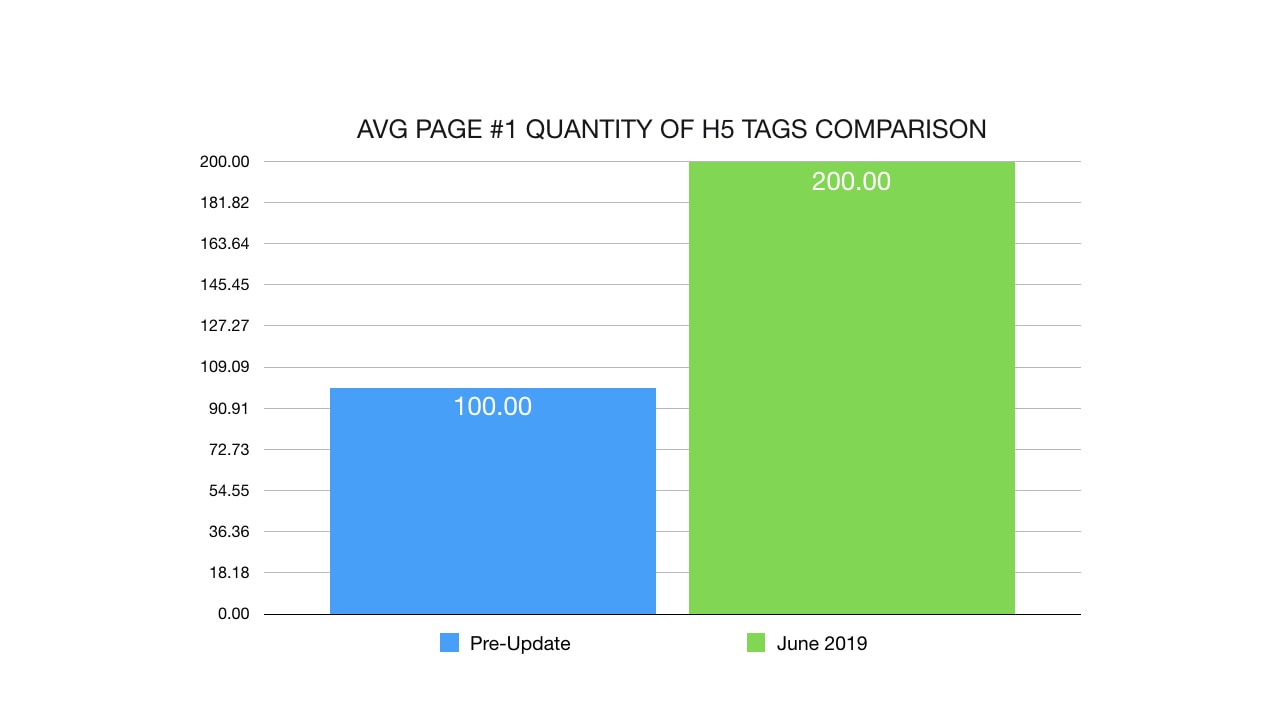

While the quantity of sub-headlines (H1, H2, H3, H4) remained the same, I did find one anomaly with regard to H5.

In the past, I noticed that the H5 tag did not influence results at all on Google. Perhaps this is a new change indicating that Google will now look at the H5 tag for relevance.
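To check the H5 anomaly on your own pages (or on the competitors ranking above you), tallying heading tags is enough. A minimal sketch; `HeadingCounter` is a hypothetical name:

```python
from collections import Counter
from html.parser import HTMLParser

HEADING_TAGS = {f"h{level}" for level in range(1, 7)}


class HeadingCounter(HTMLParser):
    """Hypothetical helper: tallies <h1>..<h6> start tags on a page."""

    def __init__(self):
        super().__init__()
        self.counts = Counter()

    def handle_starttag(self, tag, attrs):
        if tag in HEADING_TAGS:  # html.parser lower-cases tag names
            self.counts[tag] += 1


counter = HeadingCounter()
counter.feed("<h1>Title</h1><h2>Intro</h2><h2>Steps</h2><h5>Fine print</h5>")
print([counter.counts[f"h{level}"] for level in range(1, 7)])  # [1, 2, 0, 0, 1, 0]
```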

The Year Of Schema

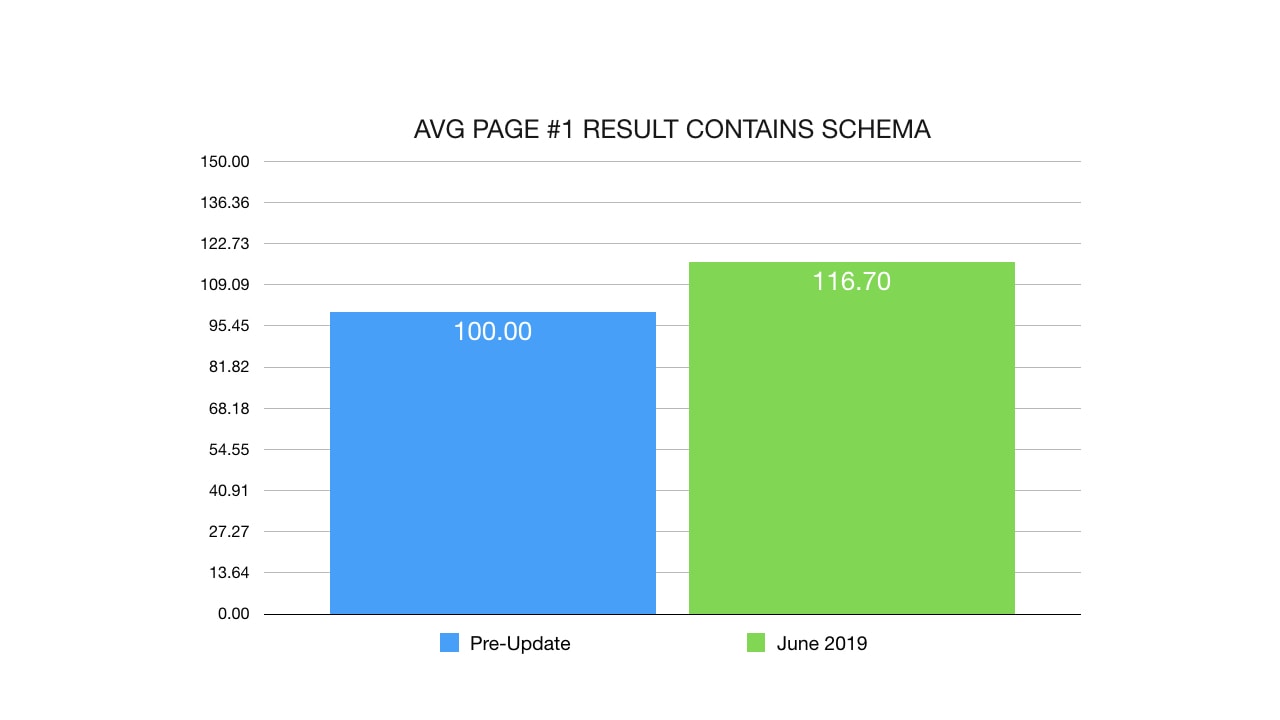

One big surprise is that schema code is everywhere now.

Perhaps this is because of a recent wide adoption of schema by webmasters or maybe Google started favoring pages with schema.

We're at a point where there is specific markup for nearly every type of industry, so there is no reason not to include it on your site.

When analyzing the first page, many of the results had some sort of schema code in them.

While the data isn't clear on whether this is a driving force or simply a consequence of wider schema adoption, we saw a 16% increase in schema on page #1, so I strongly recommend using the latest schema markup on your pages, as it might provide an advantage when it comes to ranking on Google.
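Schema.org markup is most commonly added as a JSON-LD `<script>` block. A minimal sketch that generates one, assuming `Article` is the right type for your page (recipes, products and local businesses have their own types) and using only a few common properties; `article_jsonld` is a hypothetical helper, and you should validate the output with a structured-data testing tool before relying on it:

```python
import json


def article_jsonld(headline: str, author_name: str, date_published: str) -> str:
    """Hypothetical helper: build a minimal schema.org Article as JSON-LD.

    Only a few common properties are included; add the ones that fit your page.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,  # ISO 8601, e.g. "2019-06-28"
    }
    # Escape "</" so a value containing "</script>" can't close the tag early;
    # "\/" is a valid JSON escape for "/".
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">{payload}</script>'


print(article_jsonld("Google's June 2019 Core Update", "Eric Lancheres", "2019-06-28"))
```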

Discussion

Just when it seemed as if Google was moving away from links, they strike hard with this new core update. In a way, it makes perfect sense.

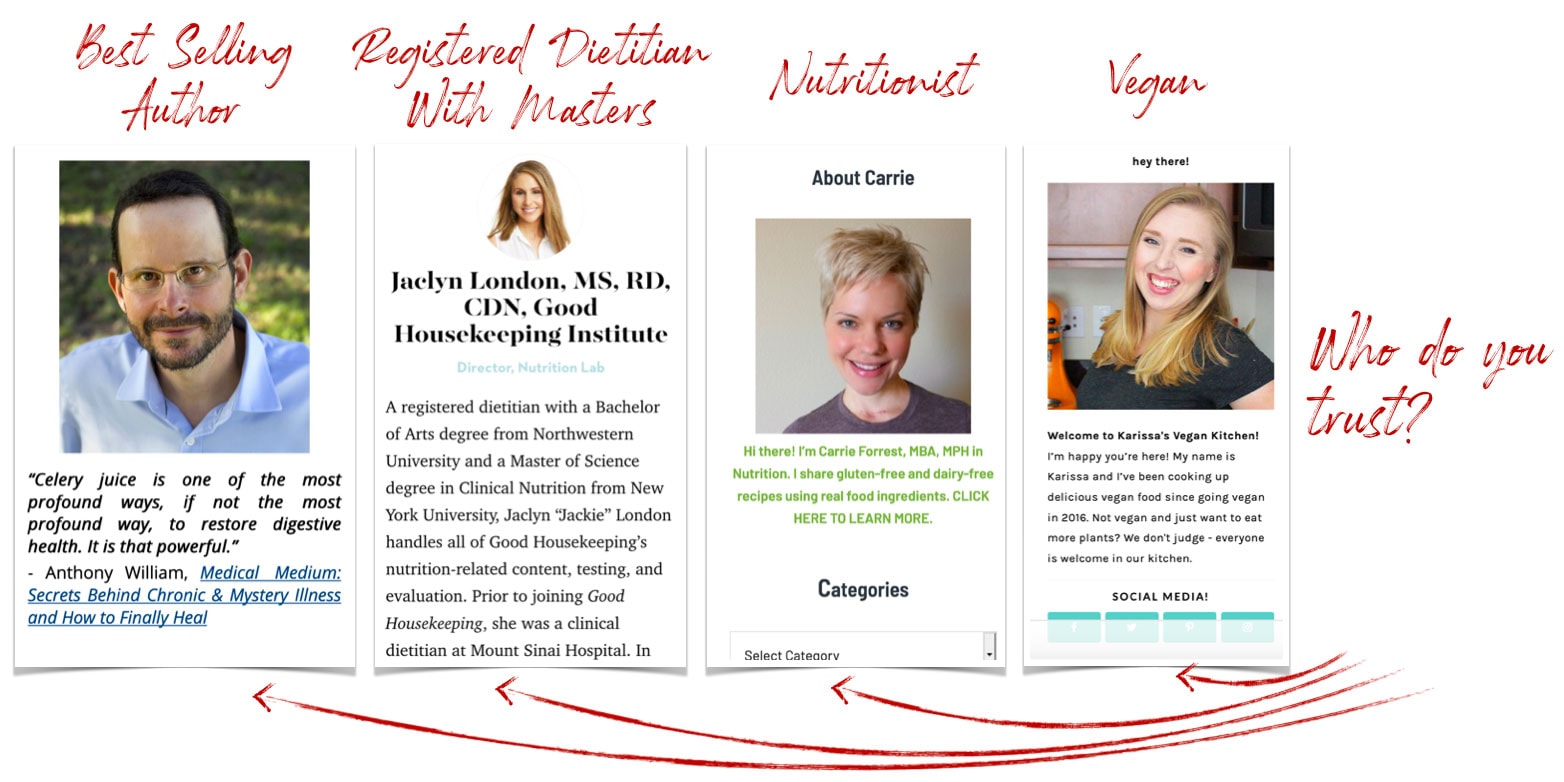

How else can you evaluate what is credible and what is not when everyone is producing similar content?

What makes the medical researcher's website more credible than the mommy blogger when it comes to giving medical advice?

Until artificial intelligence becomes so powerful that it can discern lies, false propaganda and inconsistencies within content, links remain one of the best methods to convey trust.

It appears as if Google is quite happy with the updates it has made to its linking algorithm and felt comfortable increasing the impact it has within the core algorithm.

What does this mean for the average webmaster?

First, know that after the June 2019 update, links from the same domains might (or might not) pass the same amount of trust and authority as before. When Google updates the way it calculates trust, the same link portfolio can have different effects.

For example, a link portfolio heavy in .edu and .gov links might see a surge while a link portfolio comprised of links from mommy bloggers might see a drop. If you have suffered a drop then the first thing I would ask is:

"Who is linking to me? And are they considered a trusted authority in the world?"

This is who Google trusts, according to June 2019 search engine rankings.

Source: Authors ranking for "Celery Juice", in order from left to right (leftmost ranks higher)

In addition, it appears that it's not a single link that makes the difference; instead, the total set of links pointing to a domain seems to be the driving force behind the latest traffic fluctuations.

As such, it might be a good idea to look at the overall ratio of links pointing to your site rather than an absolute "I have 3 .edu links, I should be good!"
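Looking at ratios rather than absolute counts is easy to sketch. The helper below is hypothetical: it reports what share of your unique referring hosts end in `.edu` or `.gov`. Treating those extensions as "trusted" is only a proxy based on the correlations above (Google has denied weighting extensions), and it ignores academic domains such as `.ac.uk`:

```python
from urllib.parse import urlparse


def trusted_tld_share(backlink_urls: list[str],
                      tlds: tuple[str, ...] = (".edu", ".gov")) -> float:
    """Hypothetical helper: share of unique referring hosts ending in `tlds`."""
    hosts = {urlparse(url).hostname for url in backlink_urls}
    hosts.discard(None)  # skip malformed URLs without a host
    if not hosts:
        return 0.0
    trusted = sum(1 for host in hosts if host.endswith(tlds))
    return trusted / len(hosts)


edu_links = ["https://a.edu/page", "https://b.edu/page", "https://c.edu/page"]
small = edu_links + [f"https://blog{i}.example/post" for i in range(7)]
large = edu_links + [f"https://blog{i}.example/post" for i in range(297)]
print(trusted_tld_share(small))  # 0.3
print(trusted_tld_share(large))  # 0.01
```

The same three .edu links make up 30% of a small profile but only 1% of a large one.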

Mega-Sites With Millions of Links

If links and domain authority are a driving force in rankings, then how could a site such as DailyMail, with hundreds of thousands of links, experience a traffic drop?

The answer to that is that Dailymail is still ranking, and they are still receiving an enormous amount of Google traffic. Even AFTER Metro.co.uk increased in traffic, traffic estimates from Ahrefs show that Dailymail.co.uk is still receiving considerably more traffic overall.

Essentially, this update has been a correction in the search engine landscape.

Sites that had been receiving more traffic than their domain authority warranted saw a traffic drop, and sites that had been receiving significantly less traffic than their authority dictated saw an increase.

Source: Ahrefs traffic estimates for Dailymail.co.uk and Metro.co.uk

Determining Trust And Authority

What could Google have changed with regard to link trust and domain authority?

For one, if Google is using the seed method to calculate trust, then they could have changed/altered/updated their seed list to better reflect the search engine landscape.
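For readers unfamiliar with the seed method: the best-known published version is TrustRank (Gyöngyi, Garcia-Molina and Pedersen, 2004), where a hand-picked set of trusted seed pages starts with all the trust and passes it along outgoing links, with some damping at each hop. A minimal sketch of that idea follows; it is not Google's algorithm, and the graph, seed set, and `damping`/`iterations` values are illustrative assumptions:

```python
def trust_scores(links: dict[str, list[str]], seeds: set[str],
                 damping: float = 0.85, iterations: int = 20) -> dict[str, float]:
    """TrustRank-style propagation (illustrative sketch): a PageRank-like power
    iteration whose "teleport" step always returns to the trusted seed pages.

    links: page -> pages it links to. Trust reaching a page with no outgoing
    links is dropped, as in a plain power iteration.
    """
    nodes = set(links) | {t for targets in links.values() for t in targets} | seeds
    seed_weight = {n: (1 / len(seeds) if n in seeds else 0.0) for n in nodes}
    trust = dict(seed_weight)
    for _ in range(iterations):
        # Every page keeps its share of the seed "teleport" mass...
        nxt = {n: (1 - damping) * seed_weight[n] for n in nodes}
        # ...and each page splits its current trust evenly across its outlinks.
        for page, targets in links.items():
            if targets:
                share = damping * trust[page] / len(targets)
                for target in targets:
                    nxt[target] += share
        trust = nxt
    return trust


graph = {  # hypothetical link graph
    "university.edu": ["health-site.com"],
    "health-site.com": ["recipe-blog.com"],
    "link-farm.example": ["spam-site.example"],
    "spam-site.example": ["link-farm.example"],
}
scores = trust_scores(graph, seeds={"university.edu"})
for page, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(f"{page:20} {score:.4f}")
```

Trust fades with each hop away from the seed, and the link farm ends at zero because no seed links to it, however many links it exchanges internally.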

If they aren't using the seed method, they could have increased the weight of certain extensions (such as .edu) but they have denied doing so in the past.

Another theory we've tested is monitoring the traffic flowing through links (or at the very least, going to a page) in order to validate the links on that page. The idea being that you would want a majority of your links to be hosted on pages with traffic while minimizing links on inactive websites.
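The traffic-validation theory boils down to a ratio: what fraction of the pages linking to you actually get visitors. A minimal sketch, assuming you have per-page traffic estimates from some third-party tool; the helper, the `monthly_visits` input and the one-visit threshold are hypothetical, and nothing here is confirmed as a Google signal:

```python
def share_of_links_on_visited_pages(linking_pages: list[str],
                                    monthly_visits: dict[str, int],
                                    min_visits: int = 1) -> float:
    """Hypothetical helper: fraction of linking pages with at least
    `min_visits` estimated monthly visits.

    Pages missing from `monthly_visits` are treated as having no traffic.
    """
    if not linking_pages:
        return 0.0
    active = sum(1 for url in linking_pages if monthly_visits.get(url, 0) >= min_visits)
    return active / len(linking_pages)


pages = ["https://a.example/review", "https://b.example/old-post",
         "https://c.example/guide", "https://d.example/directory"]
visits = {"https://a.example/review": 120, "https://b.example/old-post": 0,
          "https://c.example/guide": 35}
print(share_of_links_on_visited_pages(pages, visits))  # 0.5
```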

Of course, Google won't tell us for sure but we've tested a few things that work really well.

Recovering From The June 2019 Core Update

The June 2019 update is unlike the March 2019 (and August 2018) updates in the sense that it's more of a correction than an outright penalty. While our March Core Update recovery guides have been working consistently inside our community (with members recovering their full traffic), this latest June update requires a different approach.

Does this mean you can't regain lost traffic? Of course not!

You just have to address it differently.

According to the data, the way to regain lost traffic is to increase the domain authority of your website to match (or exceed) the competition. This is complicated by the fact that Google has altered the way it evaluates domain authority, so while third-party metric services are a great window into those statistics, remember that they are only estimates.

For more consistent (and predictable) results, I prefer to use authority-building link campaigns that have proven to be successful. I will be covering them in an upcoming webinar.

Closing Thoughts

It's clear that the June 2019 Core algorithm update is unlike the previous Google updates. Google seems to have focused more on domain authority in order to increase the trustworthiness of the search results. Perhaps this is a reaction to public pressure to minimize fake stories or dangerous content spreading across the internet... or perhaps there are other motives at hand.

What's apparent is that Google has improved their algorithm to better measure domain authority and then increased the weight of it within the algorithm.

This change in the algorithm can be heavily exploited, as I will demonstrate in my upcoming webinar on ranking money sites on Google. There are things I can only share in private, so if you're interested, join me inside Traffic Research as I share my biggest discoveries.

Update: The webinar has been rescheduled. Traffic Research members will have access to an upcoming webinar on how to exploit the latest algorithm changes for profit. Specifically, I will be covering how to rank your main "money site" on Google.

Registration is limited to 100 attendees.

Resources Used

Tools, charts and data used in this study:

- Traffic Research in-house data & tools.

- Scrapebox (Tool)

The swiss army knife of SEO.

- Screaming Frog SEO Spider (Tool)

The most complete website audit software.

- SEOToolLab's Cora (Data & Tool)

The best keyword correlation software.

- Ahrefs (Website and backlink statistics) (Data & Tool)

Amazing link and website analysis software.

- SEMRush (Data & Tool)

Competing link and website analysis software.

- Majestic (Data & Tool)

Great for returning statistics on large data sets.

- Google Analytics

Amazing, affordable (free if you aren't using the enterprise version) analytics software.

- Webmaster World Forums

Where I find all the horror stories of people losing most of their traffic.

- Traffic Research Forums, Emails, Slack

Resource for detailed insights on what webmasters did to recover from algorithm changes.

- Sistrix.com Winners and Losers chart

Amazing blog posts containing winners and losers.

About The Author

Eric Lancheres is credited with being the first SEO professional to discover the solution to the Panda & Penguin Updates.

In his coaching, Eric has helped dozens of entrepreneurs recover from Google penalties through his SEO consulting and regularly helps businesses raise their profits by 20-75%. Additionally, on his own, he’s created affiliate websites that sometimes generate up to 50,000 in a month, entirely on organic traffic.

These achievements have made him a featured speaker at Traffic & Conversion Summit, SEO Rockstars, & Internet Marketing Party. Although he is no longer taking on clients, he currently coaches and helps people with SEO challenges inside Traffic Research.